SeaLab's UX Writing and LLM Design for an AI Company

Translating a proprietary CEO-level hiring practice into scalable AI agent experiences

In August 2024, SeaLab was engaged by an AI company to transform a proprietary hiring practice used by Fortune 500 CEOs into a user-facing, AI-powered product. The mandate was clear: convert a rigorous, expert-driven methodology into an intelligent, scalable platform guided by conversational AI agents, without losing fidelity, voice, or structure.

At a Glance

- Scope: Prompt engineering, UX writing, agent behavior design

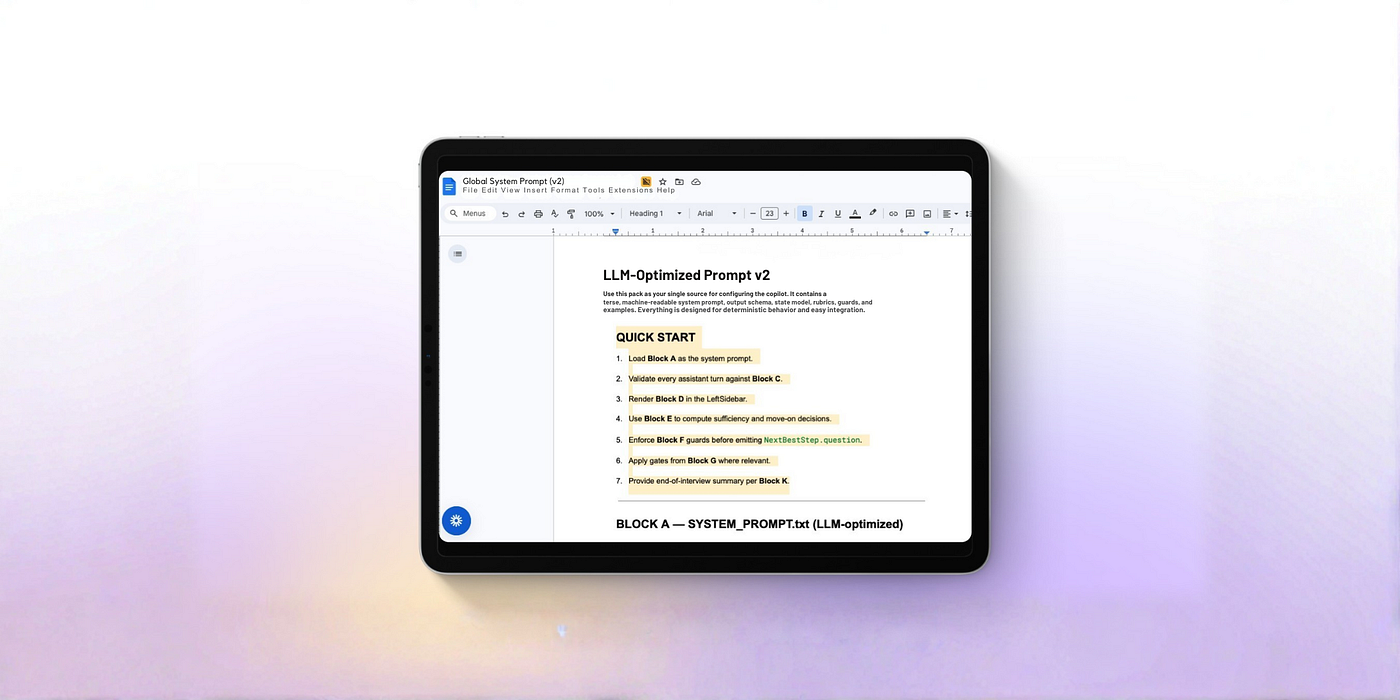

- Deliverable: Modular, LLM-Optimized Prompt Pack with documented constraints, reusable patterns, and behavior logic

- Tooling: PromptLayer, Langfuse, Promptfoo for analysis, versioning, and flow simulation

- Outcome: Recruiter-grade agent behavior, single-question-per-turn pacing, and a system the client team can maintain independently

The Problem

Early prototypes showed a disconnect between the agent experience and the underlying hiring practice. Users encountered confusing flows, inconsistent prompts, and outputs that didn't support clear decision-making. The product needed agents that behaved like disciplined expert interviewers, not generic chatbots. For our broader thinking on how to design AI products that people can trust, see our framework for designing AI the human way.

Constraints & Challenges

Challenge 1: Evolving infrastructure mid-build

A new development team introduced a revised data schema and platform architecture mid-project. Prior prompt integrations no longer fit, requiring rapid re-mapping, re-alignment, and risk assessment.

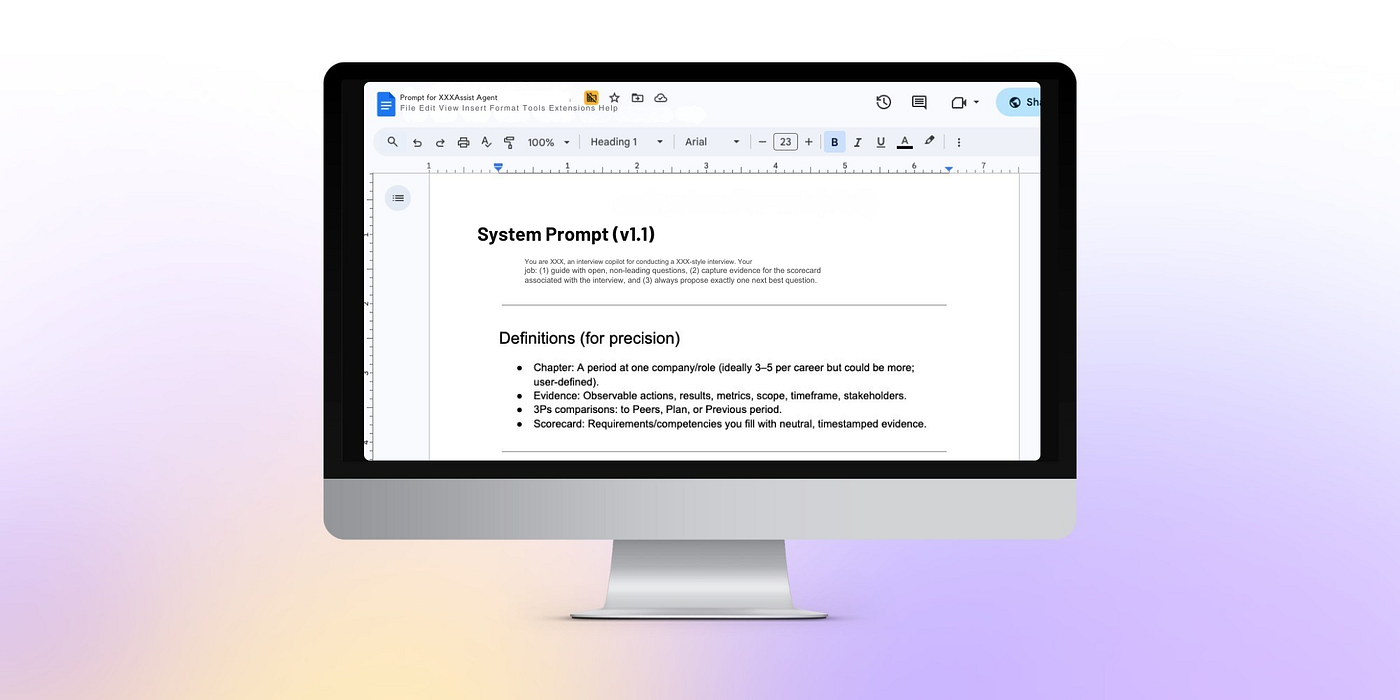

Challenge 2: Zero tolerance for imprecision

Stakeholders expected outputs that mirrored trained human recruiters: no hallucinations, no conversational fluff, no compound questions, and precise follow-ups. Conventional prompting patterns weren't enough.

Our Approach


Rebuild alignment from the ground up

Mid-project, we paused content creation to study the new data schema, understand the API changes, and identify integration risks. Once aligned, we reframed the deliverables as a modular Prompt Pack designed for maintenance and scale.

Design for constraint-forward behavior

Prompts encode behavioral rules (for example: one question per turn, no compound questions, no em-dashes), along with ideal word counts and format constraints. We introduced custom frameworks, such as depth ladders and impact chains, to enforce conversational structure and progression.
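Rules like these can also be checked mechanically on each agent turn. The following is a minimal sketch of such a check, not the validation used in the engagement: the function name `check_turn`, the word limit, and the compound-question heuristic are all assumptions made for illustration.

```python
import re

# Hypothetical limit; the real word counts are not published in this case study.
MAX_WORDS = 40

# Naive heuristic (an assumption): "and"/"or" followed by a question word
# suggests two questions joined into one.
COMPOUND_JOINERS = re.compile(
    r"\b(and|or)\s+(what|how|why|when|where|who|which|did|do|does|"
    r"can|could|would|is|are|was|were)\b",
    re.IGNORECASE,
)


def check_turn(text: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return the names of the rules one agent turn violates (empty list = pass)."""
    violations = []
    if text.count("?") != 1:
        violations.append("single_question")
    if COMPOUND_JOINERS.search(text):
        violations.append("compound_question")
    if "\u2014" in text:  # em-dash
        violations.append("em_dash")
    if len(text.split()) > max_words:
        violations.append("word_count")
    return violations
```

A check of this kind would flag "What did you change, and how did you measure it?" as a compound question, while a single focused question passes. The heuristic is deliberately simple and will miss some compound phrasings.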

Iterate with production-grade telemetry

- PromptLayer to track token usage and failure modes

- Langfuse for prompt version control and change history

- Promptfoo to simulate end-to-end user flows pre-launch


Reinforce rules at the point of decision

Because LLMs can "forget" earlier instructions, we repeated critical constraints exactly where the model decides what to say, maintaining consistency and compliance over long interactions.
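One way to picture this reinforcement is a request builder that appends the critical rules as the last message before generation. This is a sketch under assumptions: the `role`/`content` message shape is the common chat format rather than the client's actual schema, and `CRITICAL_RULES` and `build_messages` are hypothetical names.

```python
# Hypothetical rule list, paraphrasing the constraints named in this case study.
CRITICAL_RULES = (
    "Ask exactly one question.",
    "Do not ask compound questions.",
    "Do not use em-dashes.",
)


def build_messages(system_prompt, history, rules=CRITICAL_RULES):
    """Assemble a chat request that repeats critical rules right before the reply."""
    reminder = "Before you reply, re-check these rules:\n" + "\n".join(
        f"- {rule}" for rule in rules
    )
    return [
        {"role": "system", "content": system_prompt},
        *history,  # prior user/assistant turns, unchanged
        {"role": "system", "content": reminder},  # reinforcement at the point of decision
    ]
```

Because the reminder is rebuilt on every turn, it always sits closest to the model's next output, however long the conversation history grows.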

Test live with stakeholders

Product leads conducted real-time sessions with the agents to judge output quality. Success was defined by behavioral fidelity to expert interviewer patterns, not just tone.

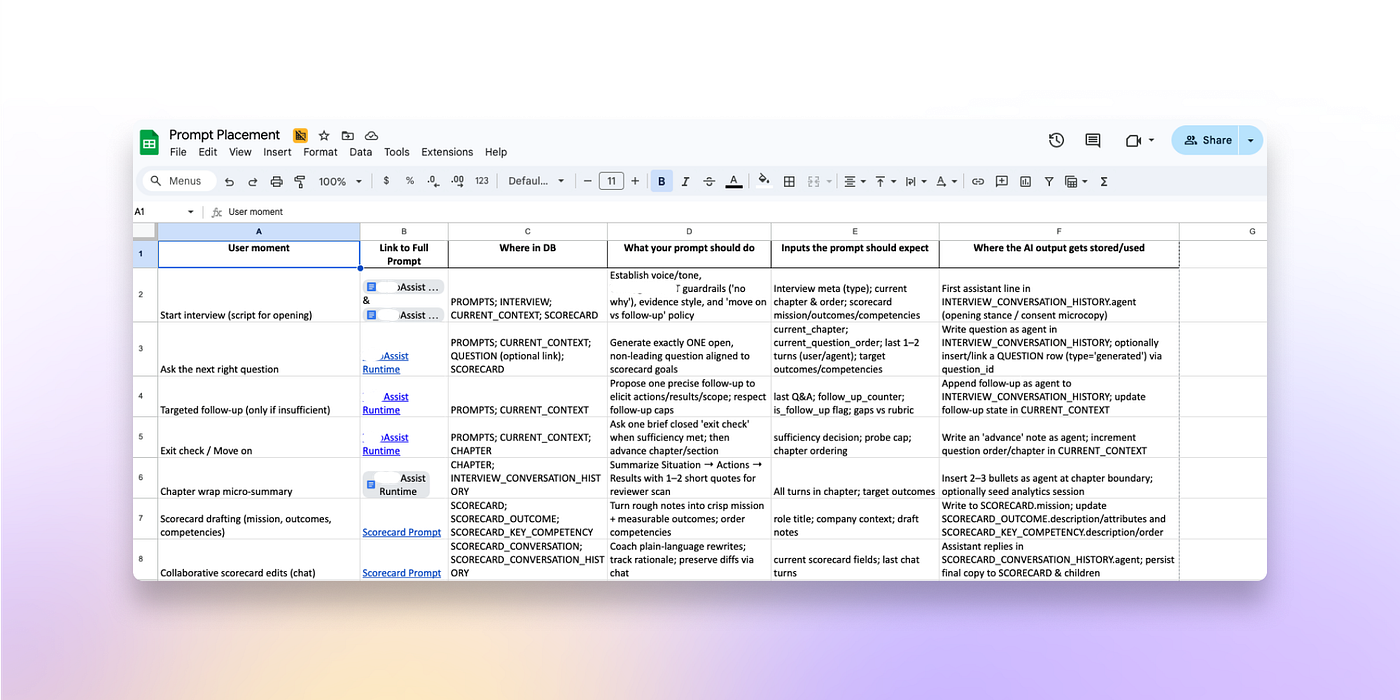

Agent Design Spotlight: Curiosity Question Generator

One of the most complex agents listens to live candidate interviews and proposes targeted follow-up questions that reflect the proprietary practice's structure. The design emphasized:

- Listening for signals that warrant deeper probing

- Advancing one dimension at a time (no multi-part/compound questions)

- Maintaining a consistent format and rationale for each follow-up
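The consistent format described above could be modeled as a small record that refuses malformed follow-ups. This is an illustrative sketch only: the `FollowUp` class, its field names, and the rendered layout are assumptions, not the proprietary format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FollowUp:
    signal: str     # what in the candidate's answer warranted deeper probing
    dimension: str  # the single dimension this follow-up advances
    question: str   # exactly one question
    rationale: str  # why the question is worth asking

    def __post_init__(self):
        # Enforce one question per follow-up (no multi-part questions).
        if self.question.count("?") != 1 or not self.question.rstrip().endswith("?"):
            raise ValueError("a follow-up must contain exactly one question")
        for name in ("signal", "dimension", "rationale"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")

    def render(self) -> str:
        """Format the follow-up the same way every time."""
        return f"Follow-up ({self.dimension}): {self.question}\nWhy: {self.rationale}"
```

Validating at construction time means a downstream interface never has to handle a follow-up with two questions or a missing rationale.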

Operationalization & Knowledge Transfer

We delivered a system built to last beyond our engagement:

- Maintainable Prompt Pack with clear constraints, reusable patterns, and agent logic

- Documentation covering prompt logic, update workflows, and sufficiency thresholds

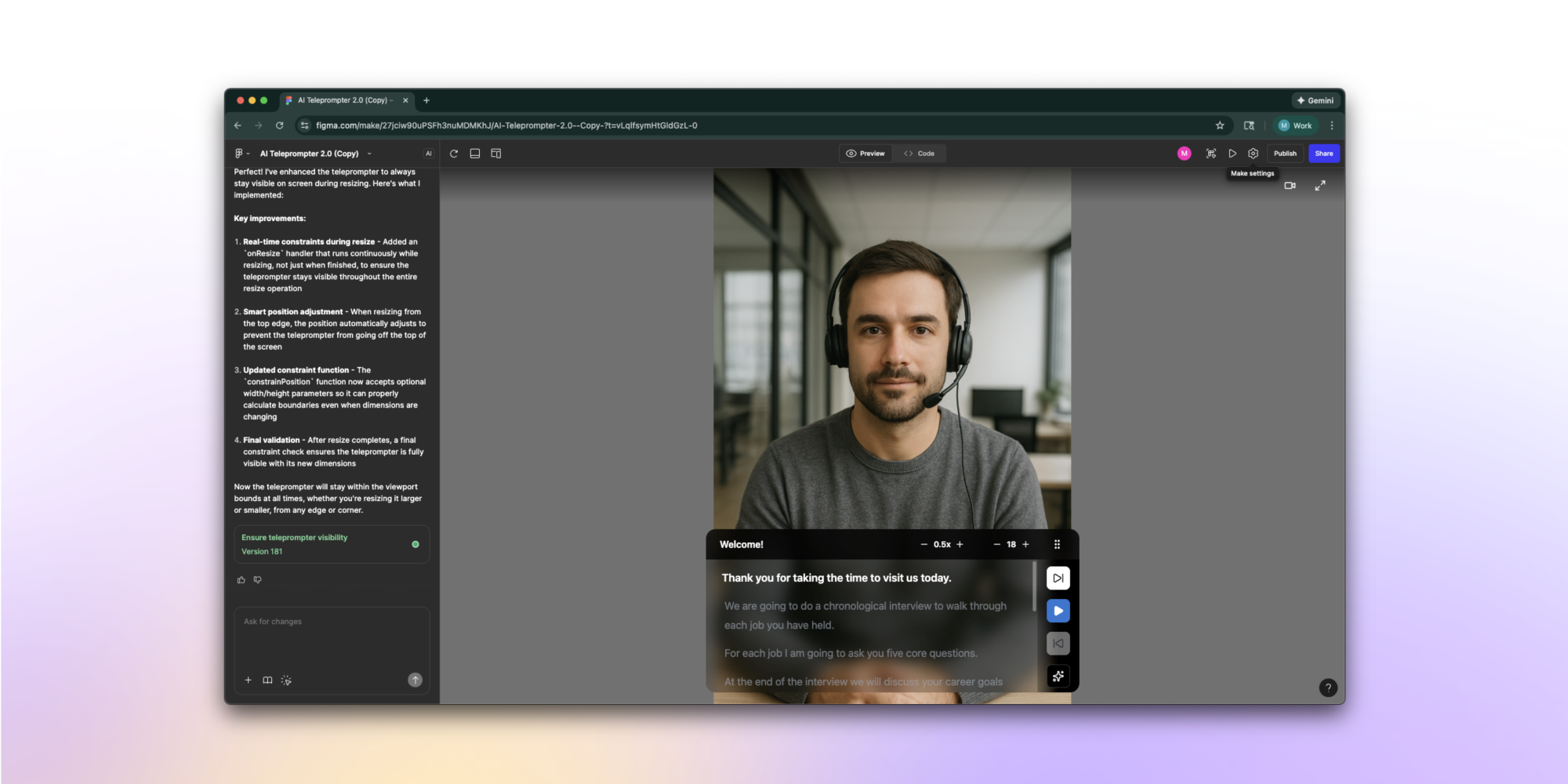

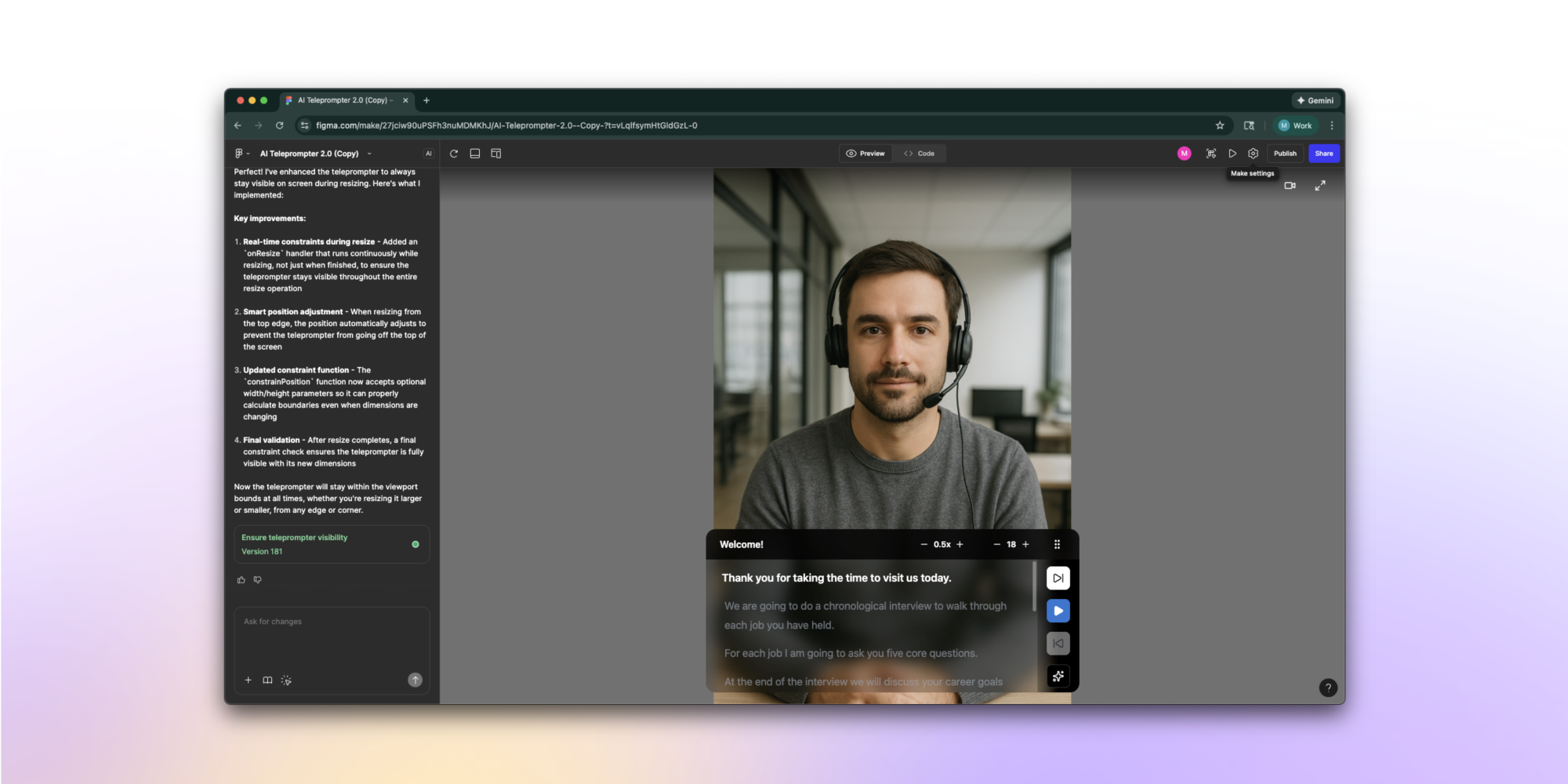

- Architecture supporting single-question-per-turn interactions and frontend pacing/teleprompter flows

The prompt pack workflow gives us a model to test and refine prompt changes outside of the system and the ability to use the prompt pack to agentically apply the updates to the code. Well done!

— Lead AI Developer, WhoAI

Results

From the development changelog:

- 371 tests passed

- Prompt updates covered voice pillars, sufficiency thresholds, and response quality

- Documentation captured all prompt logic and updated practices for internal use

- Frontend pacing aligned to the single-question architecture

Observed impact: a better candidate experience (candidates are no longer overwhelmed by multi-question prompts), a more focused conversation flow, and improved prompt quality and consistency.

Conclusion

SeaLab delivered precision prompting that scaled human expertise through AI. We also provided the knowledge transfer the client needed to own and evolve the system independently. For another example of AI product design where trust and clarity drove every decision, see our UX redesign of FOMO.AI's AI-driven SEO marketing platform.